About ExaMobil

Sept 2023 - April 2024

ExaMobil is an autonomous robotic rover platform that extends the digital audit capabilities of ExaMap with mobile robotics. ExaMap, a digital exam-audit platform, uses RFID tags and NFC sensors in an integrated hardware-software solution that digitizes access control, check-ins, and logging, offering a scalable way to monitor examinee attendance and maintain detailed, auditable records for each exam. Building on this, ExaMobil provides a rover-based platform that moves autonomously through the examination venue and delivers in-exam services such as digital attendance, examinee support, and proctor assistance. The system is designed to preserve the integrity of examination data and to streamline proctoring tasks.

Goals

Features

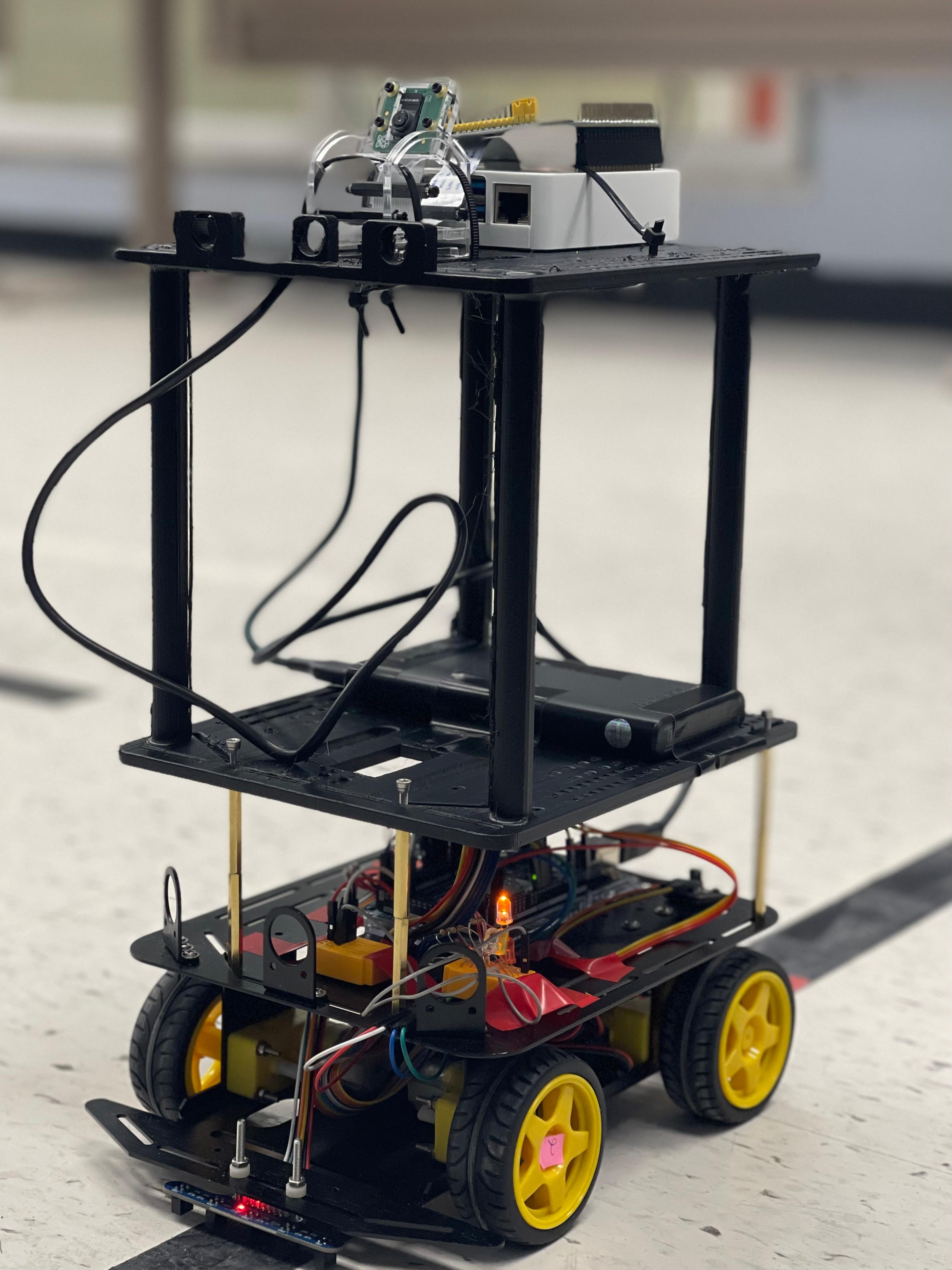

Hardware Design

Software Design

Goals

- Improve exam integrity by creating a secure and auditable exam environment.

- Automate and digitize the exam attendance and monitoring process.

- Provide support to examiners and proctors to streamline exam administration.

- Navigate the environment autonomously to deliver services where needed, adapting to various exam room layouts and conditions.

- Scale to support examinations with hundreds of students across multiple rooms, providing a consistent and reliable service regardless of exam size.

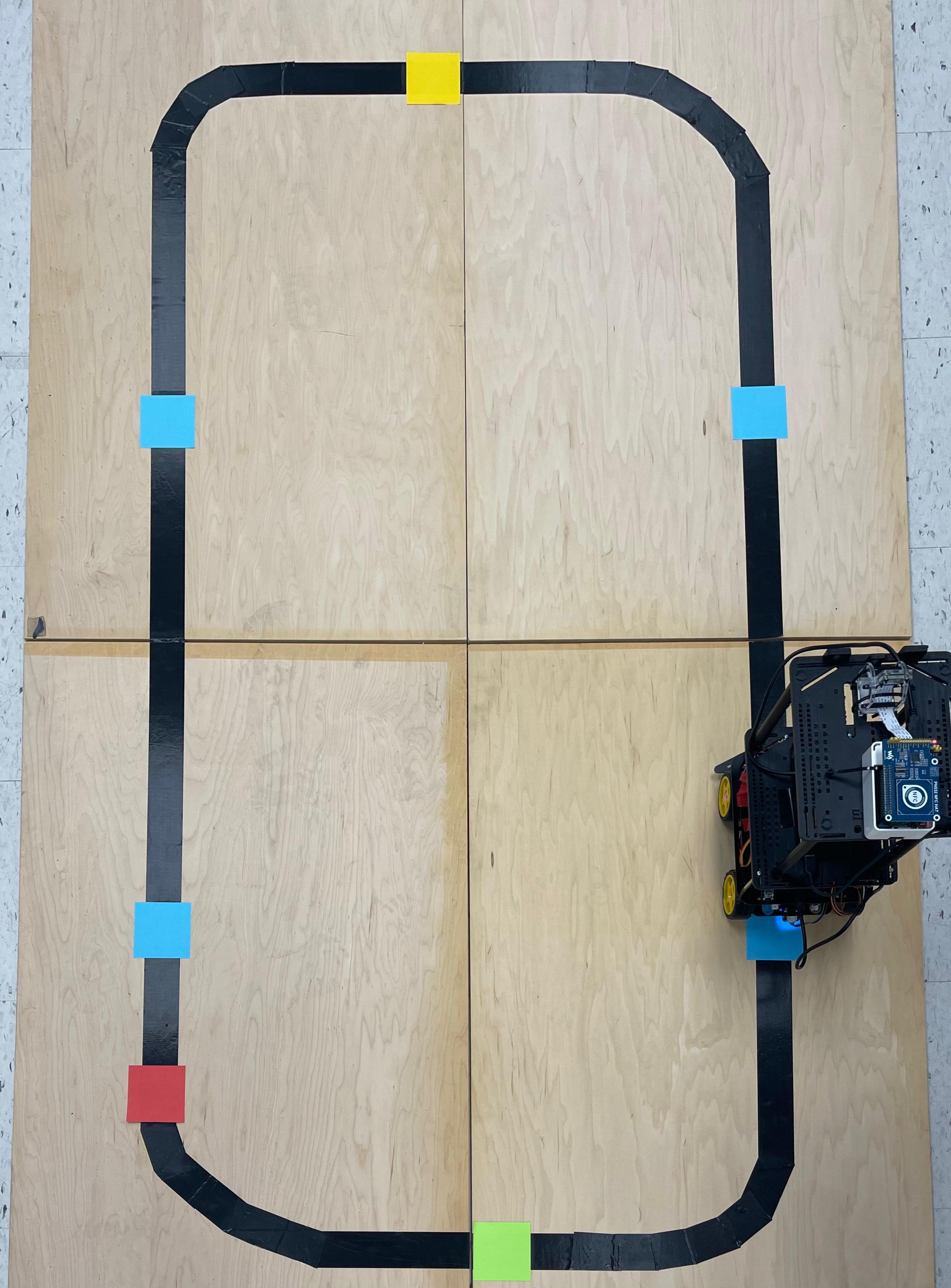

Features

- Dynamic Environment Driving

The system is designed to operate in unfamiliar examination venues. By detecting and following a physical path on the floor, it controls its movements and continuously traces the path as it operates. This enables the rover to navigate any environment that includes the required path, without prior knowledge of the location.

- Waypoint-based Vehicle Control and Response

The system can detect specific waypoints and key points along a fixed loop path while in motion. Using a combination of analog and optical sensors, it determines the rover's position and status, enabling it to deliver relevant services to examiners and students based on its current mode.

- Gesture Detection & Recognition

Equipped with analog and optical sensors, the system can detect and recognize hand gestures within its range and field of view. Gesture recognition allows users to request services from the rover or to interact with its autonomous functions through simple hand signals.

- Mode-controlled Service Behaviour

The system can operate in various modes, each defining specific behaviors and services provided by the rover. These modes can be set during startup, through remote access, or by buttons and gestures, allowing flexible operation to meet different needs.

- Digital Organization of Exam Attendance

The system creates an electronic database to track each student's attendance status during an exam session. This data is presented on a dashboard that examiners and administrators can monitor in real time.

- Tap-based Check-in Check-out Process

Students check in and out of the exam by tapping their university RFID identification cards on the system's NFC readers, which updates their attendance status immediately.

- Information Security of Exam Materials

All exam-related data, including attendance, logs, and booklet IDs, is stored in the cloud using Google Firebase. This digital record reduces the risk of lost or misrepresented information associated with paper records, because all interactions are digitally tracked and saved.

- Reduced Procedural Time

The system reduces the time and effort required for manual tasks such as recording attendance and managing exam booklets. Examiners and teaching assistants benefit from faster record-keeping and a lighter administrative workload.
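The tap-based check-in and check-out feature above can be illustrated with a minimal sketch. It assumes that each card tap simply toggles a student's status and appends an entry to an audit trail. The `AttendanceLog` class, its field names, and the card UIDs are hypothetical, and the in-memory dictionary is only a stand-in for the cloud store; none of this is taken from the actual ExaMobil code.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AttendanceLog:
    """In-memory stand-in for the attendance store (hypothetical)."""
    status: dict = field(default_factory=dict)  # card UID -> "in" or "out"
    events: list = field(default_factory=list)  # append-only audit trail

    def tap(self, card_uid: str, when: Optional[datetime] = None) -> str:
        """Toggle a student's status on each card tap and record the event."""
        when = when or datetime.now(timezone.utc)
        # First tap checks the student in; each later tap flips the status.
        new_status = "out" if self.status.get(card_uid) == "in" else "in"
        self.status[card_uid] = new_status
        self.events.append((when.isoformat(), card_uid, new_status))
        return new_status


log = AttendanceLog()
log.tap("04A1B2")  # first tap: checked in
log.tap("04A1B2")  # second tap: checked out
```

Keeping the event list append-only means the current status can always be rebuilt from the trail, which is one plausible way to keep the record auditable.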

Hardware Design

- Sensors and Perception

  - Line Follower Sensors: Detect and follow paths on the ground.

  - Color Sensor: Recognizes colored markers for navigation.

  - Infrared (IR) and Ultrasonic Sensors: Help avoid obstacles.

  - Camera: Captures visuals for advanced gesture recognition.

- User Interface

  - LED Indicators: Show the rover's status.

  - LCD Screen: Provides feedback and information to users.

  - Buttons and Audio Cues: Allow users to interact with the rover.

- Control and Processing

  - Microcontroller: Acts as the rover's brain, processing sensor data and executing decisions.

  - Motor Control Algorithms: Software that guides motor actions based on sensor input.

- Mobility and Motion Control

  - Chassis: The main structure of the rover.

  - Wheels and Motors: Enable movement and directional control.

  - Motor Drivers: Control the speed and power of the motors.

  - Encoders: Provide feedback for precise movement control.
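As an illustration of how a color sensor reading might be turned into a navigation marker, here is a minimal sketch that picks the nearest reference color in RGB space. The marker labels, reference values, and distance threshold are assumptions made for the example, not values from the ExaMobil hardware.

```python
import math

# Hypothetical marker palette: label -> reference RGB reading.
MARKERS = {
    "path": (20, 20, 20),
    "seat": (200, 30, 30),
    "turn": (30, 60, 200),
    "dock": (30, 180, 60),
}


def classify_marker(rgb, markers=MARKERS, max_distance=80.0):
    """Return the label of the nearest reference color, or None if too far."""
    best_label, best_dist = None, float("inf")
    for label, ref in markers.items():
        dist = math.dist(rgb, ref)  # Euclidean distance in RGB space
        if dist < best_dist:
            best_label, best_dist = label, dist
    # Reject readings that are not close to any known marker.
    return best_label if best_dist <= max_distance else None


classify_marker((210, 40, 25))    # close to the "seat" reference
classify_marker((128, 128, 128))  # far from every reference, so None
```

The rejection threshold matters in practice because floor colors and lighting vary between venues; a real system would likely calibrate the reference values on site.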

Software Design

- Path Tracking and Control

  - Line-Following Algorithm: Guides the rover along a predefined path using line follower and color sensors.

- Digital Exam Attendance

  - RFID and NFC Integration: Allows students to check in and out by tapping their ID cards.

  - Solution Booklet Mapping: Uses QR codes to link each student's booklet with their attendance record.

- Gesture Recognition Sub-System

  - Hand Gesture Detection: Uses a camera to detect specific gestures for assistance requests.

- Administrative Dashboard

  - User Interface (UI): Displays real-time attendance and exam data for administrators.

  - Data Visualization: Shows a virtual layout of the exam room with status indicators.

- Cloud-Based Back-End

  - Google Firebase: Stores exam data securely in the cloud, allowing easy access and backup.

  - Real-Time Updates: Synchronizes data instantly, ensuring administrators have up-to-date information.
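The line-following item under Path Tracking and Control can be sketched with a simple proportional controller. This is a generic technique, not necessarily the one used on the rover: it assumes a row of reflectance sensors read from left to right, with higher values meaning "more line", and the gain `kp`, base speed, and function names are hypothetical.

```python
def line_error(readings):
    """Weighted-centroid position of the line in [-1, 1]; None if line lost.

    readings: two or more sensor values from left to right, higher = more line.
    """
    total = sum(readings)
    if total <= 0:
        return None
    n = len(readings)
    # Sensor positions spread evenly from -1 (far left) to +1 (far right).
    positions = [-1 + 2 * i / (n - 1) for i in range(n)]
    return sum(p * r for p, r in zip(positions, readings)) / total


def clamp(value, low=0.0, high=1.0):
    """Limit a motor command to the allowed range."""
    return max(low, min(high, value))


def motor_speeds(readings, base=0.5, kp=0.4):
    """Proportional steering: returns (left, right) speeds in [0, 1]."""
    err = line_error(readings)
    if err is None:
        return 0.0, 0.0  # line lost: stop rather than guess
    # Line to the right (err > 0): speed up the left wheel to turn right.
    return clamp(base + kp * err), clamp(base - kp * err)


motor_speeds([0, 0, 1, 0, 0])  # line centered: both wheels at base speed
motor_speeds([0, 0, 0, 0, 1])  # line far right: left wheel faster
```

A proportional-only controller is the simplest choice; adding derivative or integral terms is a common refinement when the rover oscillates around the line.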

How it works

ExaMobil operates in a series of modes, each designed to support different tasks during an examination session. These modes run sequentially, ensuring a smooth and structured process for assisting students and examiners throughout the exam. Here's how each mode functions:

1. Learn & Map

At the beginning of the session, ExaMobil enters its startup mode, "Learn & Map." In this mode, the rover follows a predefined path around the examination venue, identifying key points and building a virtual map of the environment, including seating arrangements. This map serves as the foundation for all subsequent modes, creating necessary data and visual references to support the session.

2. Attendance & Booklet Capture

Once mapping is complete, ExaMobil shifts to "Attendance & Booklet Capture." In this mode, it follows its mapped path to each student's seat, where it activates the ExaMap system so that students can record their attendance. Each attendance record is logged and linked to the student's exam booklet for tracking.

3. Student Support

For the remainder of the exam, ExaMobil runs in "Student Support" mode. It patrols the venue, scanning for gestures from students requesting assistance. When a student signals for help, the rover registers the request and alerts exam proctors via the administrative interface. This allows proctors to promptly assist the student, ensuring a smooth exam experience and reducing disruptions.

ExaMobil's multi-mode operation enables it to adapt to different tasks, from mapping the environment to supporting students, making it an effective, versatile solution for exam venues.
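The sequential mode flow described above can be sketched as a small state machine. The mode names come from the description; the `ModeController` class, its method names, and the rule that "Student Support" persists until the session ends are assumptions made for illustration.

```python
from enum import Enum


class Mode(Enum):
    LEARN_AND_MAP = "Learn & Map"
    ATTENDANCE_CAPTURE = "Attendance & Booklet Capture"
    STUDENT_SUPPORT = "Student Support"


# Each mode hands over to the next; the last one stays active.
NEXT_MODE = {
    Mode.LEARN_AND_MAP: Mode.ATTENDANCE_CAPTURE,
    Mode.ATTENDANCE_CAPTURE: Mode.STUDENT_SUPPORT,
    Mode.STUDENT_SUPPORT: Mode.STUDENT_SUPPORT,
}


class ModeController:
    """Tracks the rover's current mode (hypothetical helper)."""

    def __init__(self):
        self.mode = Mode.LEARN_AND_MAP
        self.history = [self.mode]

    def complete_current(self):
        """Advance to the next mode once the current mode's task is done."""
        nxt = NEXT_MODE[self.mode]
        if nxt is not self.mode:
            self.mode = nxt
            self.history.append(nxt)
        return self.mode


controller = ModeController()
controller.complete_current()  # mapping done: attendance capture begins
controller.complete_current()  # attendance done: student support begins
```

Encoding the order in a lookup table keeps the transitions explicit, so an extra mode or a manual override (for example from a button or gesture) could be added without touching the controller logic.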

My Role

Sensor Systems Engineer and Web Developer

Designed and implemented a color sensor-based path-tracking system for an autonomous examination rover, delivering precise navigation and real-time adaptability alongside a user-friendly website to showcase functionalities.

- Designed and Developed Color Sensor System: Implemented and integrated the color sensor system that enables the rover to detect and follow specific paths based on predefined color markers.

- Created Path-Tracking Algorithm: Designed and optimized the algorithm that processes color sensor input for path-following and autonomous navigation in examination environments.

- Developed Functional Modules: Built modules for real-time color recognition and dynamic response, allowing the rover to adapt to varied examination layouts.

- Designed and Deployed Website: Developed an informative website to showcase ExaMobil's features, operating modes, and real-world applications.

- Ensured System Reliability: Tested the color sensor system and algorithm to validate performance and accuracy under diverse conditions.

- Collaborated Across Teams: Coordinated with hardware and software teams to align the sensor system with the rover's overall design and functionality.

- Programming Languages: Used Python for algorithm and system development, JavaScript and HTML/CSS for the website, PHP for the server that hosts the website, and C for hardware-level programming.